Category: Wikipedia

-

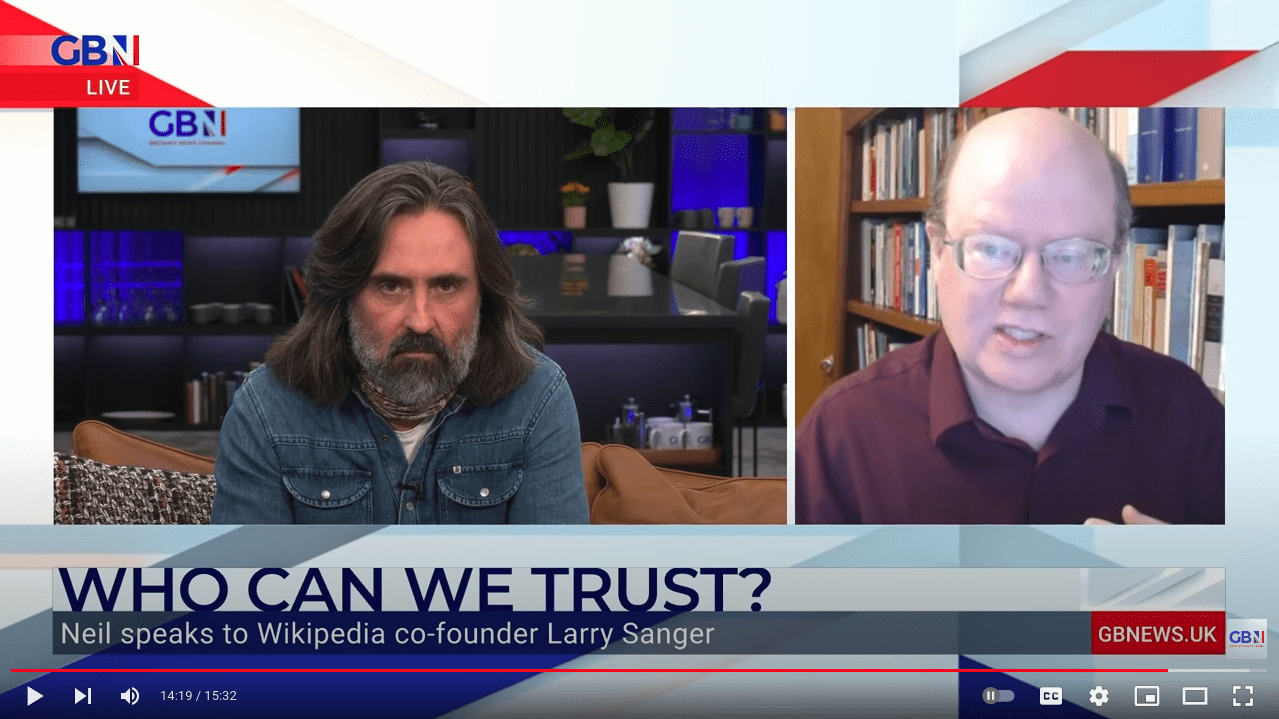

Wikipedia Criticism With a Scottish Accent

Neil Oliver is an interesting cultural and political commentator from Scotland. I sat down with him last weekend. It was fun, although frankly Neil’s accent is so heavy that I occasionally had to

-

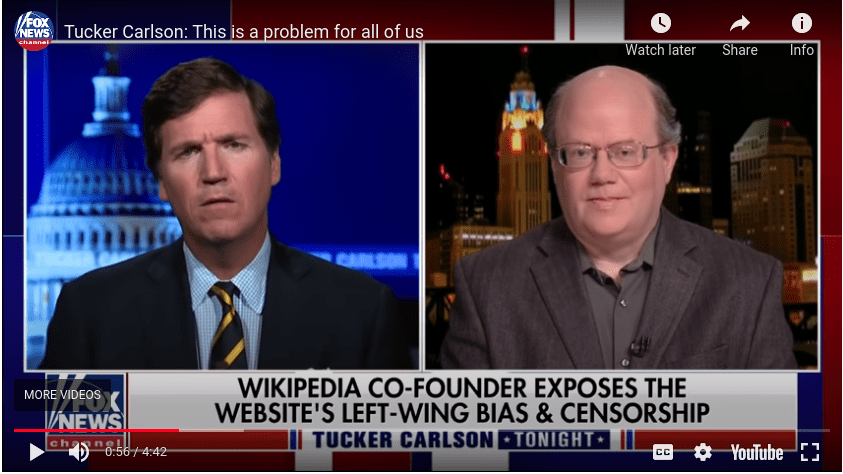

I apologize for Wikipedia to Tucker Carlson

Nice of Tucker to invite me on his program to talk about issues with Wikipedia circa 2021. This was the second time he invited me on, out of the blue. I’m very grateful

-

UnHerd opinions

I frankly had no idea that this UnHerd interview would blow up the way it did. Not only did the interview itself get a lot of traffic, it ended up being shared and

-

Wikipedia Is More One-Sided Than Ever

“All encyclopedic content on Wikipedia,” declares a policy page, “must be written from a neutral point of view (NPOV).” This is essential policy, believe it or not. Maybe that will be hard to

-

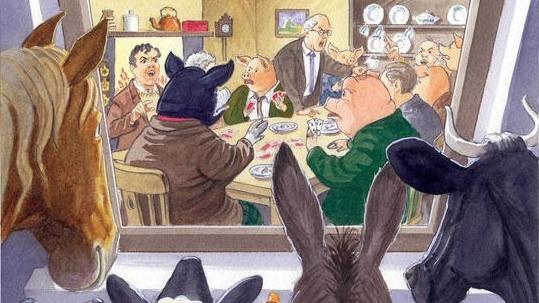

Wikipedia Is Badly Biased

Wikipedia’s “NPOV” is dead. The original policy long since forgotten, Wikipedia no longer has an effective neutrality policy. There is a rewritten policy, but it endorses the utterly bankrupt canard that journalists should avoid what they call “false balance.”

-

EU copyright reform could threaten wiki encyclopedias

If we are to believe its critics, under the pending EU copyright reform legislation, the EU would implement a “link tax” across all of Europe. So if you link to a news article,

-

Some thoughts, 15 years after Wikipedia’s launch

It’s been 15 years since I announced the opening of the new Wikipedia.com site, with a little message that said: http://www.wikipedia.com/ Humor me. Go there and add a little article. It will take all

-

Let’s try out “Golden Filter Premium” on Wikipedia, shall we?

I encountered a journalist-activist on Twitter, a writer for (among others) Al Jazeera in English, who is nevertheless a free speech activist. We discussed the recent FoxNews.com article that reported, among other things, that